The

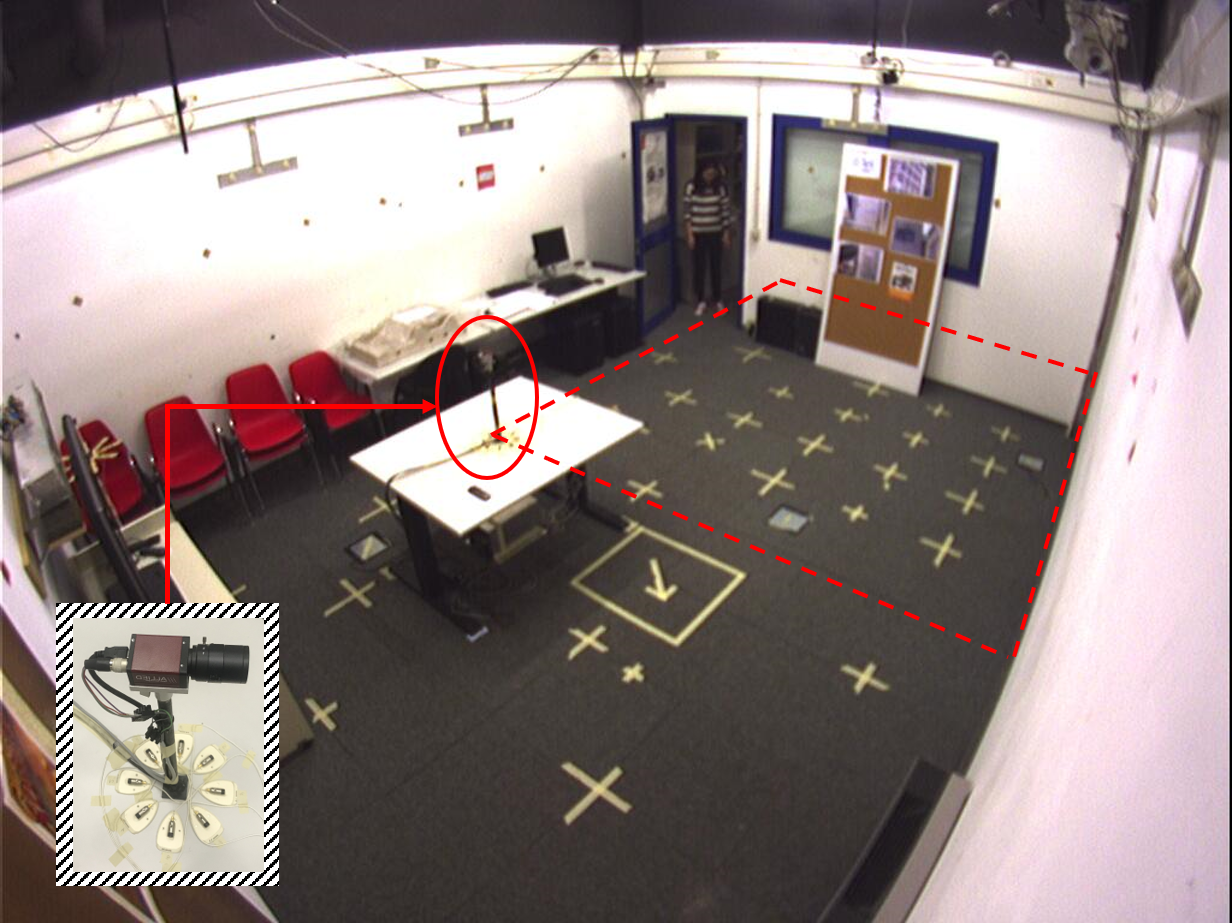

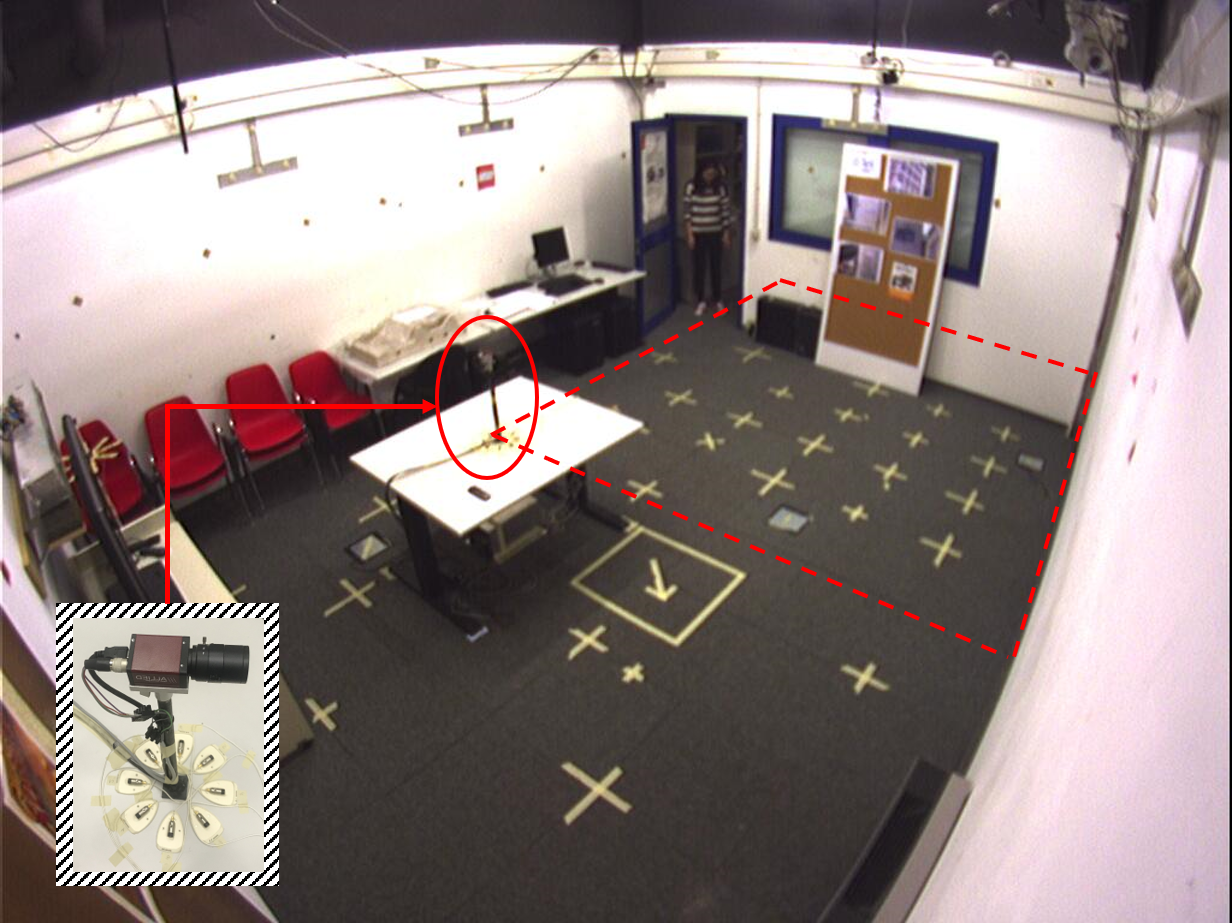

CAV3D (Co-located Audio-Visual streams with 3D tracks) dataset for 3D speaker tracking was collected with a sensing platform (Fig. 1) consisting of a monocular colour camera co-located with an 8-element circular microphone array. The audio sampling rate is 96 kHz. Video was recorded at 15 frames per second. The sensing platform was placed on a table in a room with dimensions 4.77 x 5.95 x 4.5 m and reverberation time of approximately 0.7s to record up to three simultaneous speakers. In addition to the sensing platform, 4 hardware-triggered CCD colour cameras installed at the top corners of the room recorded the scene. The dataset is synchronized, calibrated and annotated.

Fig. 1 Recording environment. The region surrounded by the red dashed line is within the camera’s field of view (approximately 90 degrees).

Scenarios and annotation

The dataset contains 20 sequences, whose duration ranges from 15s to 80s. Sequences can be categorized in three types:

- CAV3D-SOT: 9 sequences with a single speaker (seq6-13, seq20)

- CAV3D-SOT2: 6 sequences with a single active speaker and a second interfering person (not speaking) (seq14-19)

- CAV3D-MOT: 5 sequences with multiple simultaneous speakers (seq22-26)

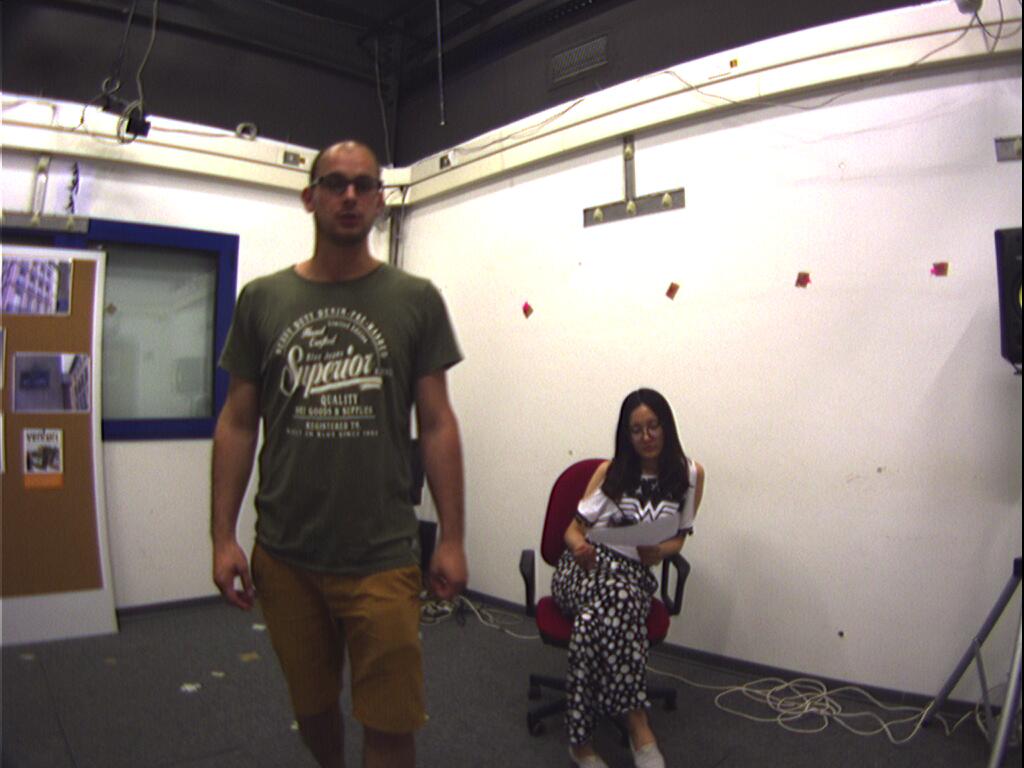

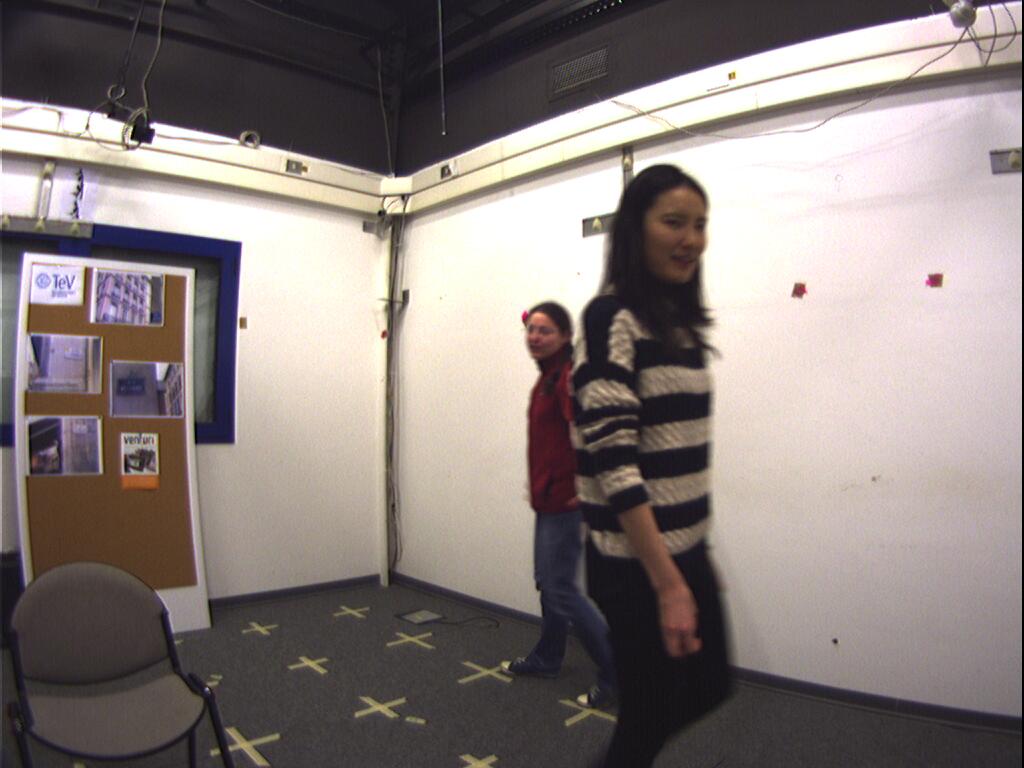

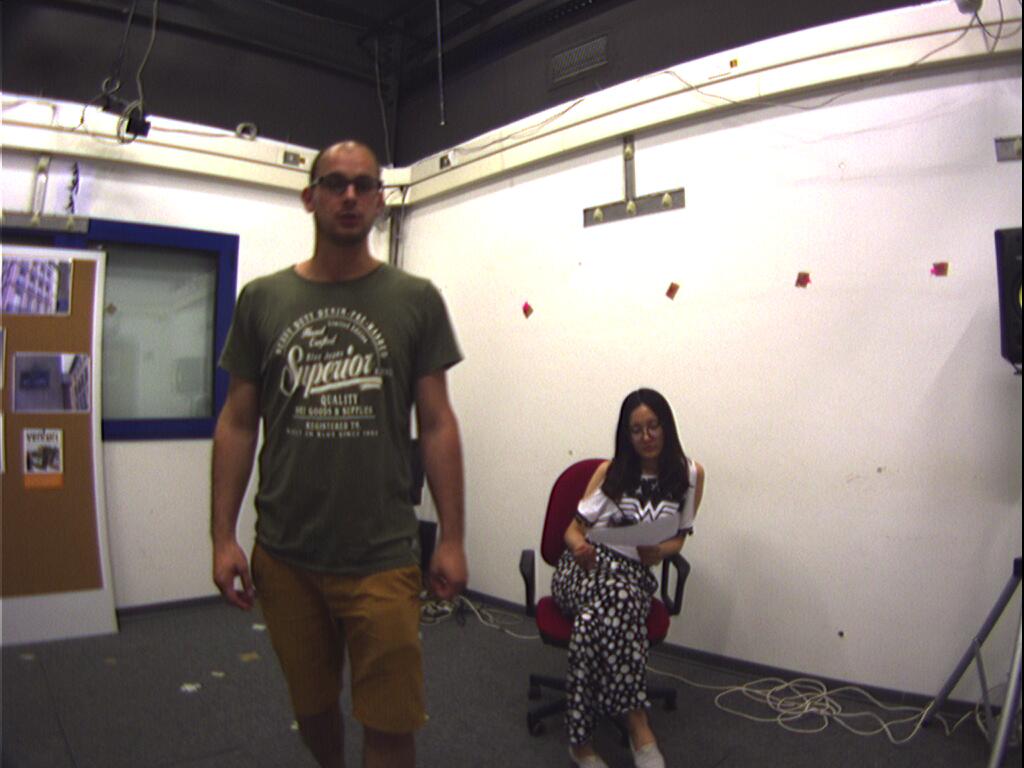

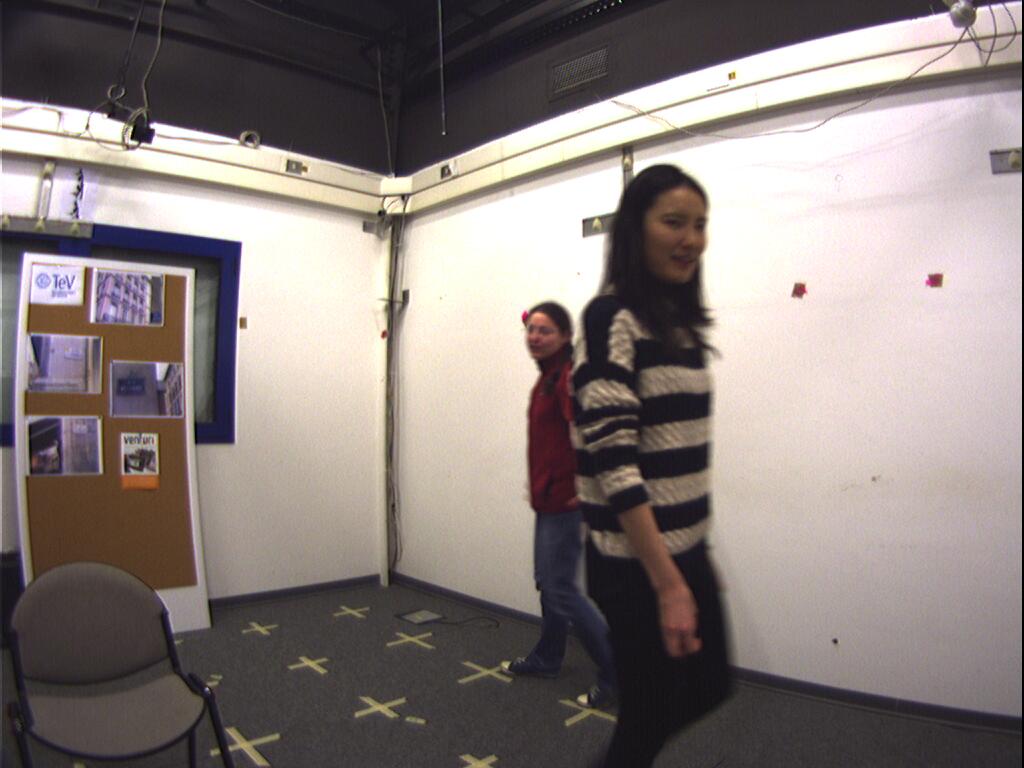

Figures 2 to 5 show some key frames. Speakers undergo abrupt direction changes, arrange objects, walk outside the camera’s FoV and often change their pose thus generating occlusions, non-frontal views and illumination changes. An air conditioner produces background noise that compounds with human-made noise (e.g. clapping and stomping).

|

|

| Figure 2 CAV3D-SOT2: seq18

|

Figure 3 CAV3D-MOT: seq24

|

|

|

| Figure 4 CAV3D-MOT: seq25

|

Figure 5 CAV3D-MOT: seq26

|

Sensors calibration.

The position of the microphones and the camera were measured independently but on a same world reference system, whose origin is in one corner of the room at floor level, the x and y axes are along the walls and the z-axis pointing upwards.

As for the the audio acquisition chain, the 3D location of each microphone in the world reference system was measured with cm precision. Pre-amp gains were tuned to ensure the same sensitivity and dynamics across different channels. We added a statement in Section V, where we also specified that the audio sampling accuracy is 24 bit. We used a professional audio card that ensures more than 20 bit effective resolution, which corresponds to digital input audio streams of very high quality.

The camera is positioned approximately 47.8 cm above the microphone array and was calibrated with standard procedures from OpenCV. This involved three steps (see the last paragraphs of Sec. V):

(

Step-1) Calibration of the camera’s

intrinsic parameters using a sequence of images observing a chessboard pattern printed on an A4 paper in different close-up poses in front of the camera. These images were processed by cv::findChessboardCorners to obtain a list image positions of the chessboard square corners for each image. These lists of image coordinates were then processed by cv::calibrateCamera to obtain focal length, principal point, and radial distortion coefficients

(

Step-2) The camera’s

extrinsic parameters (3D position and orientation) were computed as follows. After manually measuring the 3D position of a set of scene markers on the floor (yellow markers in Fig. 8) and on the walls (the corners of the T-shaped objects visible in Fig. 8). The 3D positions of these markers were measured in the world reference system (as done with the microphone 3D locations described above). Then, we have annotated the image coordinates of each of these scene markers on an image captured by the camera mounted at the co-located platform, hereby obtaining a set of 3D-2D point correspondences for extrinsic calibration. To estimate the camera pose in the room reference system from these correspondences we used cvFindExtrinsicCameraParams with Levenberg-Marquardt optimization.

(

Step-3) A multi-camera optimization (see last paragraph of Sec. V) is finally run to improve the accuracy of the camera calibration.

This calibration is then used for 3D mouth annotation where we run an optimization based on Sparse Bundle Adjustment to obtain 3D trajectories, accurate calibration, and an algorithmic correction of the manual annotations by minimizing the re-projection error on all camera views and sequences simultaneously.

Details about the annotation procedure are available in

Lanz et al, “ACCURATE TARGET ANNOTATION IN 3D FROM MULTIMODAL STREAMS”, ICASSP 2019

Audio-visual synchronization.

Clapping was used at the start and end of each recording. Synchronization was done manually by aligning the same clapping across audio and video streams.

Mouth positions on the image plane.

Manual annotation on the image plane at each frame from the five individual camera views. Frames from the same camera are displayed sequentially in a graphical user interface, with a superimposed 50 x 50 cropped image region centred at the position annotated at the previous frame. This provides a zoomed-in view of the candidate region on the frame, where the annotator can easily update the replicated mouth location with a mouse click.

Mouth positions in 3D.

Given the manually annotated mouth positions from the 5 viewpoints, using bundle adjustment we simultaneously refine the camera calibration parameters and the 3D points, according to an optimal criterion involving the corresponding image projections.

Voice activity labels.

The

transcriber software, an editor which allows transcribing the time markers and identities of individual segments, is used to label the speech/non-speech frames.